Best Practice

Create private VPC subnets in different Availability Zones, reserved for EKS network interfaces and future cluster upgrades.

Why we need this

Security:

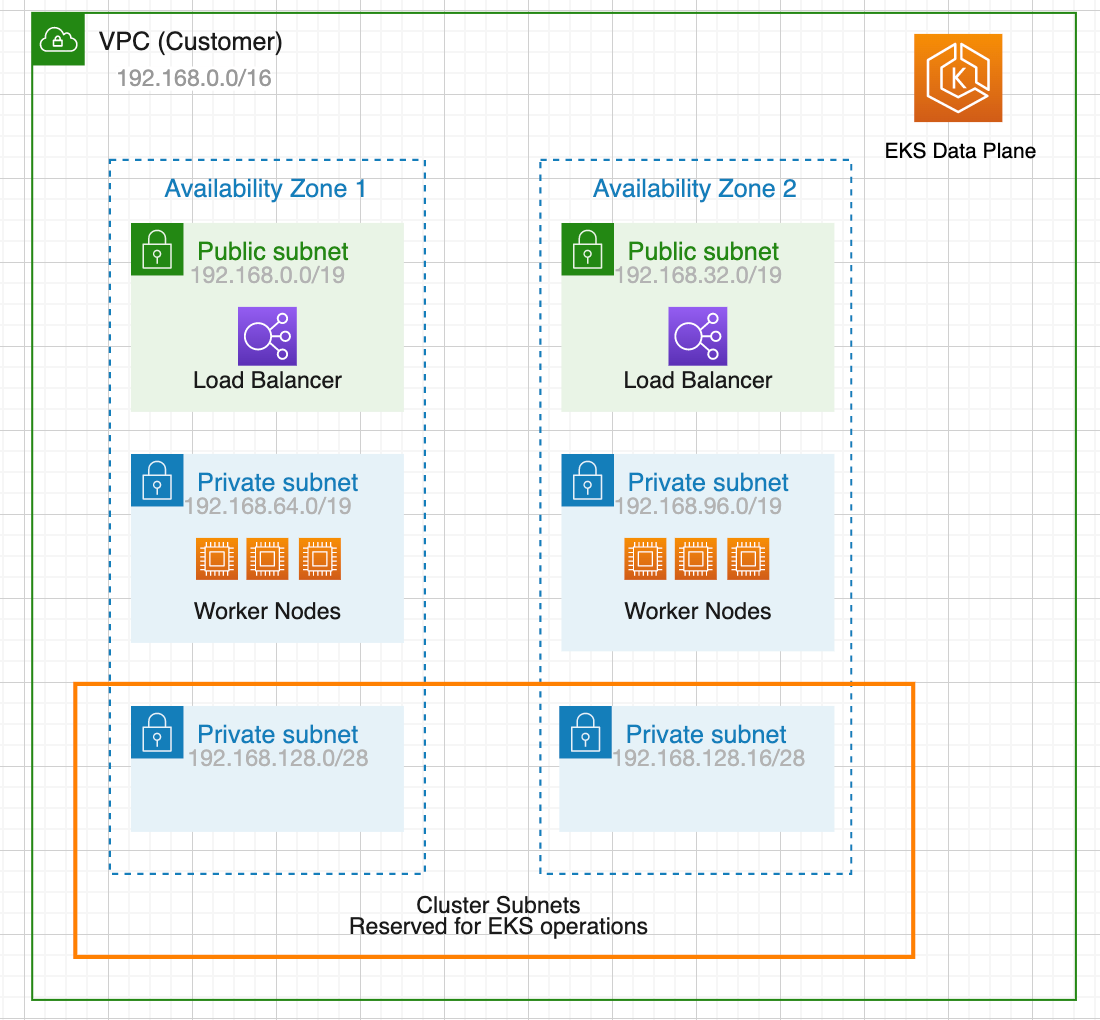

When creating an EKS cluster, we need to specify a VPC and at least two subnets in different Availability Zones. The subnets specified at cluster creation are called cluster subnets. Placing worker nodes inside cluster subnets is not recommended: the cluster subnets are “reserved” for EKS operations.

Maintainability:

When we upgrade our cluster, Amazon EKS requires up to five free IP addresses from the subnets that we specified when we created the cluster. Amazon EKS creates new cluster elastic network interfaces (ENIs) in any of those subnets. The new network interfaces may be created in different subnets than the ones our existing network interfaces are in.

If we don’t follow this best practice and instead specify large subnets, or even all subnets, at cluster creation, we put the cluster at risk of a failed upgrade in the future. If the cluster subnets are too large, they tie up a lot of IP addresses and waste resources. When we later run out of available IP addresses, we may need to deploy nodes in the cluster subnets. If one of those subnets does not have enough free IP addresses and EKS happens to choose it for the new ENI during an upgrade, the upgrade will fail.
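To make the IP arithmetic concrete, here is a minimal sketch of a headroom check. It assumes the standard AWS behavior of reserving five addresses in every subnet (the first four and the last one) and treats the "up to five free IP addresses" from the text as a conservative per-subnet threshold. The function names and the example CIDR are hypothetical, chosen only for illustration.

```python
import ipaddress

# AWS reserves the first four addresses and the last address of every subnet.
AWS_RESERVED_PER_SUBNET = 5
# Conservative threshold: EKS needs "up to five" free IPs for an upgrade.
EKS_UPGRADE_FREE_IPS = 5


def usable_ips(cidr: str) -> int:
    """Return how many addresses in the subnet can actually be assigned."""
    network = ipaddress.ip_network(cidr)
    return network.num_addresses - AWS_RESERVED_PER_SUBNET


def has_upgrade_headroom(cidr: str, ips_in_use: int) -> bool:
    """Return True if the subnet still has enough free IPs for an upgrade."""
    free = usable_ips(cidr) - ips_in_use
    return free >= EKS_UPGRADE_FREE_IPS


if __name__ == "__main__":
    # A /28 holds 16 addresses, 11 of which are assignable.
    print(usable_ips("10.0.0.0/28"))
    # A few existing ENIs still leave room; a subnet shared with nodes may not.
    print(has_upgrade_headroom("10.0.0.0/28", ips_in_use=4))
    print(has_upgrade_headroom("10.0.0.0/28", ips_in_use=8))
```

A small subnet that holds only EKS network interfaces passes this check comfortably, while the same subnet fails it once a handful of nodes or Pods are placed there too.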

ASCENDING Approach

The ASCENDING approach is to use two /28 subnets in different Availability Zones as cluster subnets and to create at least two public and two private subnets separately for workloads. Usually, a /19 CIDR is a good size for worker subnets. The worker nodes are deployed in private subnets for maximal control over traffic to the nodes, which works well for the vast majority of Kubernetes applications. Ingress resources (such as load balancers) are instantiated in public subnets and route traffic to Pods running in the private subnets.
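As an illustration of this layout, the sketch below carves a VPC CIDR into two /28 cluster subnets plus two private and two public /19 worker subnets. The VPC range `10.0.0.0/16`, the Availability Zone labels, the function name `plan_subnets`, and the order in which blocks are assigned are all assumptions made for the example, not a prescribed allocation.

```python
import ipaddress
from itertools import islice


def plan_subnets(vpc_cidr: str, azs=("az-a", "az-b")):
    """Split a VPC CIDR into cluster, private, and public subnets.

    Hypothetical layout: the first two /19 blocks become private worker
    subnets, the next two become public subnets, and two /28 cluster
    subnets are taken from the start of the fifth /19 block.
    """
    vpc = ipaddress.ip_network(vpc_cidr)
    blocks = list(vpc.subnets(new_prefix=19))
    if len(blocks) < 5:
        raise ValueError("VPC is too small for four /19 subnets plus cluster subnets")

    private = blocks[0:2]
    public = blocks[2:4]
    # Only the first two /28 ranges of the fifth block are needed.
    cluster = list(islice(blocks[4].subnets(new_prefix=28), 2))

    return {
        "cluster": [(az, str(net)) for az, net in zip(azs, cluster)],
        "private": [(az, str(net)) for az, net in zip(azs, private)],
        "public": [(az, str(net)) for az, net in zip(azs, public)],
    }


if __name__ == "__main__":
    for role, subnets in plan_subnets("10.0.0.0/16").items():
        for az, cidr in subnets:
            print(f"{role:8} {az}  {cidr}")
```

Keeping the cluster subnets in their own block, away from the worker ranges, makes it easy to see at a glance that nothing else should be deployed there.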

Daoqi

DevOps Engineer @ASCENDING

Celeste Shao

Data Engineer @ASCENDING